NIST’s New AI Profile Signals a Shift in Cyber Risk

AI systems don’t behave like traditional software. Their outputs can vary, they adapt over time, and they are deeply intertwined with data, models, agents, and external services. As organizations embed AI into their operations and adversaries adopt it to scale their attacks, the foundational logic behind traditional cyber risk models begins to change.

AI changes how systems behave.

AI changes how threats evolve.

AI changes how cyber risk must be managed.

The National Institute of Standards and Technology’s new Cyber AI Profile (NIST IR 8596) formalizes that shift.

Rather than replacing existing frameworks, it layers AI-specific priorities onto the NIST Cybersecurity Framework 2.0, recognizing that AI is now both part of the attack surface and part of the defense stack. Here’s what it means in practice.

The Three Pillars: Secure, Defend, Thwart

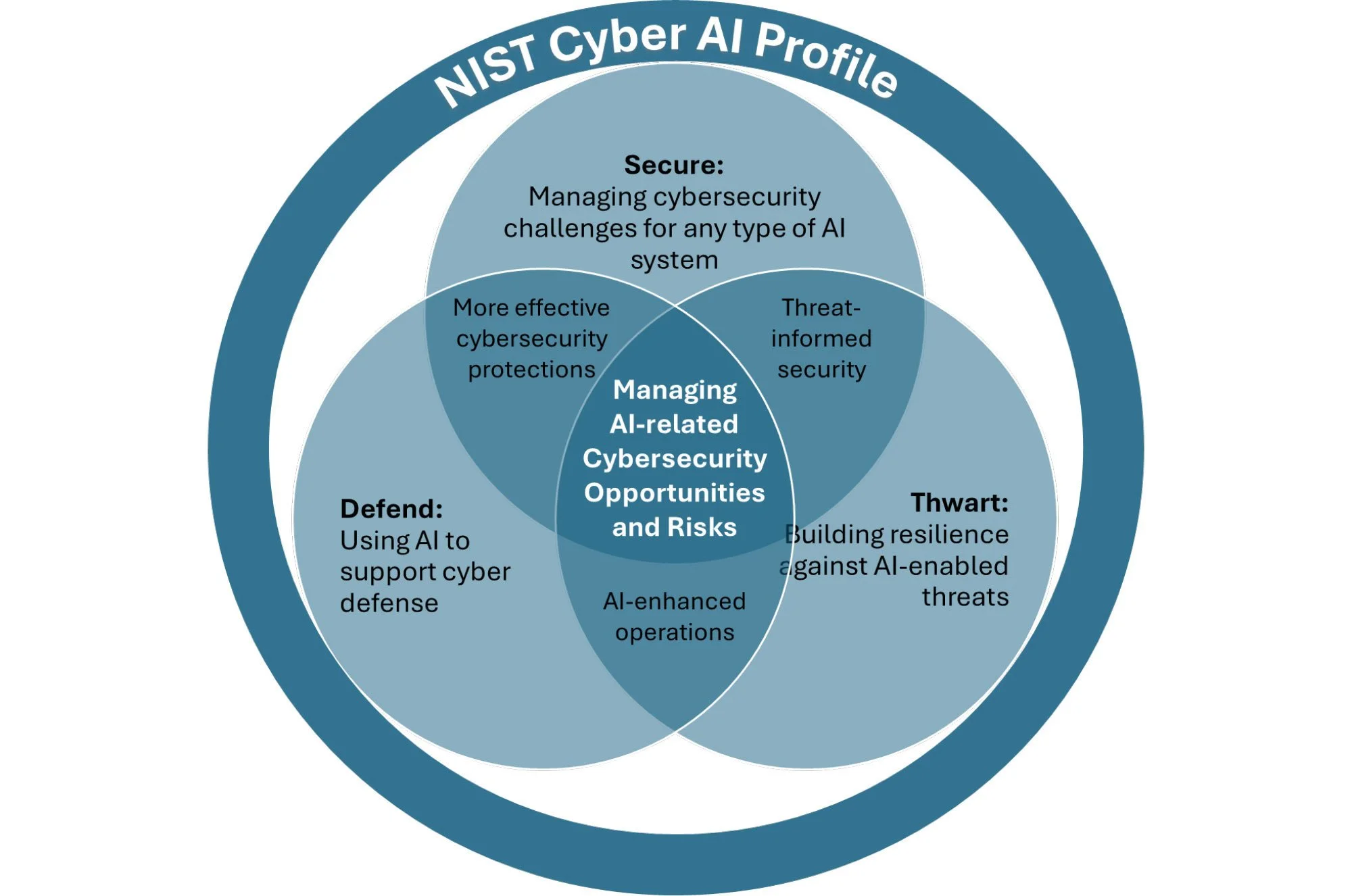

At the center of the Cyber AI Profile are three core facets: Secure, Defend, and Thwart. Together, they provide a practical framework for evaluating AI-related cybersecurity risk.

Secure

Secure focuses on protecting AI systems and their components, such as models, agents, prompts, training data, and supporting infrastructure.

As AI becomes embedded in enterprise workflows, these elements expand the attack surface and introduce new vulnerabilities. Risks include:

Model manipulation

Prompt injection

Unintended data exposure

Securing AI is not just about infrastructure hardening. It requires visibility into how models are trained, updated, accessed, and integrated into operational workflows.

Defend

Defend addresses the use of AI to strengthen cybersecurity operations.

Here, AI functions as a force multiplier by supporting detection, triage, investigation, automation, and reporting. The goal is not simply to add AI into workflows. It is to achieve measurable improvement in speed, consistency, and scalability across threat detection, investigation, and response (TDIR) functions.

The key question becomes: Does AI improve outcomes, or simply accelerate existing inefficiencies?

Thwart

Thwart turns outward by recognizing that adversaries are adopting AI as well.

From AI-assisted phishing and automated reconnaissance to increasingly adaptive malware development, attackers are leveraging generative and automated capabilities to increase speed, scale, and personalization. In one recent report, 82.6% of phishing emails exhibited some use of AI.

Organizations must prepare for a threat landscape where automation and generative capabilities are available to both sides.

Relationship Between NIST Cyber AI Profile Focus Areas

These three pillars reflect the reality that AI is entering organizations from different directions.

Some enterprises are actively deploying AI internally and must prioritize securing those systems. Others may not yet rely heavily on AI but are already facing AI-enabled threats. Many are exploring AI to enhance their defenses.

In practice, however, no organization can remain siloed within a single pillar. Over time, every enterprise will need to address all three.

Visually, the Cyber AI Profile is depicted as three overlapping circles. The overlap between Secure and Thwart, described as threat-informed security, highlights the need to design and harden AI systems with adversarial behavior in mind. The intersection of Thwart and Defend, framed as AI-enhanced operations, reflects the dynamic where AI is used to counter AI-driven threats.

The Cyber AI Profile remains in draft form, but it signals a durable shift. AI-specific realities are now being integrated into mainstream cyber risk management.

What the Cyber AI Profile Means for Security Leaders

What stands out is the operational emphasis.

The guidance moves beyond high-level governance themes and into day-to-day cybersecurity operations. The focus is less on abstract principles and more on how AI systems perform under real-world conditions.

AI is no longer a distant policy concern or an innovation lab experiment. It is embedded in production systems, influencing decisions, interacting with security tooling, and reshaping the threat landscape in real time.

The standards-setting community is responding accordingly by integrating AI-specific realities into the core of cyber risk management.

For security leaders, that shift raises practical questions:

If AI components are now part of the attack surface, how are models, agents, prompts, and training data inventoried, monitored, and tested under existing CSF-aligned controls?

Where should limited security budget be allocated first: securing internally deployed AI systems, enhancing detection with AI, or preparing for AI-enabled adversaries?

If AI is embedded in detection and response workflows, how is its performance measured against meaningful TDIR outcomes: not just speed, but accuracy, containment, and resilience?

If adversaries are using automation to accelerate reconnaissance, phishing, and exploit development, how is threat modeling evolving to reflect that velocity?

If AI reduces security workload, how is that reduction measured, and is capacity being reinvested into higher-order capabilities like threat hunting and detection engineering?
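To make the first of these questions concrete, here is a minimal sketch of what an AI asset inventory check might look like. It is illustrative only: the `AIAsset` record, the `coverage_gaps` helper, and the 90-day retest window are hypothetical choices for this example, not anything prescribed by the Cyber AI Profile or CSF 2.0.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Component kinds taken from the asset types named in this article.
KINDS = {"model", "agent", "prompt", "training_data"}


@dataclass
class AIAsset:
    """One inventoried AI component (hypothetical record layout)."""
    name: str
    kind: str
    owner: str
    last_tested: Optional[date] = None  # date of last adversarial test, if any
    monitored: bool = False             # is runtime monitoring in place?

    def __post_init__(self) -> None:
        if self.kind not in KINDS:
            raise ValueError(f"unknown asset kind: {self.kind}")


def coverage_gaps(assets: List[AIAsset], today: date,
                  max_age_days: int = 90) -> List[str]:
    """Return names of assets that are unmonitored or not tested recently.

    The 90-day default is an assumption for illustration only.
    """
    gaps = []
    for asset in assets:
        stale = (asset.last_tested is None
                 or (today - asset.last_tested).days > max_age_days)
        if stale or not asset.monitored:
            gaps.append(asset.name)
    return gaps


# Example inventory with made-up entries.
inventory = [
    AIAsset("triage-llm", "model", "secops", date(2025, 1, 10), True),
    AIAsset("soc-agent", "agent", "secops", None, True),
    AIAsset("phish-prompt", "prompt", "ir", date(2024, 6, 1), False),
]
print(coverage_gaps(inventory, today=date(2025, 2, 1)))
```

The point of the sketch is that models, agents, prompts, and training data can be tracked with the same discipline as any other asset class, with testing and monitoring status recorded alongside ownership.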

Traditional controls such as asset inventories, patch management, and access governance remain necessary in an AI world. But they are not sufficient.

AI introduces behavioral variability, opaque decision paths, and feedback loops that demand deeper validation and more adaptive oversight.

AI initiatives may originate in innovation teams or data science groups. The security implications, however, land squarely with the CISO. The Cyber AI Profile makes clear that AI-related investment can’t be siloed. Security leaders must balance defensive modernization with workforce development and adversarial preparedness, even as cost pressures persist.

The Role of Simulation

One of the more concrete signals in the draft Profile appears in its discussion of thwarting AI-enabled attacks:

“Modeling and simulation scenarios may also need to be developed to facilitate effective detection, response, and recovery efforts.”

That language matters.

It reflects an understanding that AI-enabled threats can’t be addressed through awareness training or policy documents alone. Security teams must experience how AI-assisted phishing, chatbot manipulation, and automation-driven attacks behave in practice. They also need to know how defensive systems respond under pressure.

That is the role of live-fire simulation.

On a cyber range, live-fire simulations place both human teams and AI systems inside realistic enterprise environments where models, agentic SOC workflows, and defensive processes can be exercised side by side. Performance can be measured. Failure modes can be identified. Supervision requirements and operational blind spots become visible before production exposure.

This kind of simulation serves all three pillars of the Cyber AI Profile:

Secure: Exposing AI systems and supporting workflows to adversarial techniques before production deployment

Defend: Evaluating whether AI-enhanced triage, enrichment, or response automation improves measurable outcomes

Thwart: Preparing teams with realistic exposure to AI-enabled attack patterns that evolve faster than static playbooks
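As a rough illustration of the Defend item above, the sketch below compares a baseline triage workflow with an AI-assisted one using results from the same exercise. The `Alert` record, the `score` function, and the sample data are all hypothetical; precision, recall, and mean time to contain stand in for whatever TDIR outcomes an organization actually tracks.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Alert:
    """One alert from a simulation run (hypothetical record layout)."""
    is_malicious: bool                   # ground truth from the scenario
    flagged: bool                        # what the triage workflow decided
    minutes_to_contain: Optional[float]  # None if never contained


def score(alerts: List[Alert]) -> Dict[str, Optional[float]]:
    """Compute precision, recall, and mean time to contain for one run."""
    tp = sum(a.is_malicious and a.flagged for a in alerts)
    fp = sum((not a.is_malicious) and a.flagged for a in alerts)
    fn = sum(a.is_malicious and (not a.flagged) for a in alerts)
    times = [a.minutes_to_contain for a in alerts
             if a.is_malicious and a.minutes_to_contain is not None]
    return {
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
        "mean_minutes_to_contain": mean(times) if times else None,
    }


# Made-up results for the same four alerts under two workflows.
baseline = [Alert(True, True, 60.0), Alert(True, False, None),
            Alert(False, True, None), Alert(False, False, None)]
ai_assisted = [Alert(True, True, 20.0), Alert(True, True, 40.0),
               Alert(False, True, None), Alert(False, False, None)]

for label, run in (("baseline", baseline), ("ai_assisted", ai_assisted)):
    print(label, score(run))
```

Scoring both workflows against the same scenario keeps the comparison on accuracy and containment, not on speed alone.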

As organizations align with the Cyber AI Profile, controlled adversarial testing environments will become an increasingly important part of cyber risk management.

Cloud Range provides a live-fire cyber range environment where organizations can simulate AI-enabled attack scenarios, benchmark AI-enhanced defenses, and evaluate how models and agents behave under realistic pressure before they influence production decisions.

If AI is becoming part of your attack surface and your defense stack, the critical question is no longer whether it is being adopted. It is whether it has been validated under realistic conditions.

Learn how Cloud Range supports AI validation and operational readiness.