What Needs to Change in Cyber Risk Management and Why

Many organizations invest significant time and effort in cyber risk management. That work includes building dashboards, tracking findings, keeping risk registers, mapping controls to frameworks, and reporting progress to leadership.

None of that is inherently wrong. In fact, much of it is necessary.

The problem is that these activities can create the appearance of control without improving the organization’s ability to withstand a real attack.

The recent Gartner® report, “Maverick: Cyber-Risk Management Is Governance Theater That Doesn’t Strengthen Cyber Defense,” captured this tension directly. As the authors write:

“Cyber-risk management became a parallel bureaucracy. We replaced attack reality with scoring, artifacts, and approvals. Cyber risk is documented and signed off on, but rarely early enough to change decisions. Governance maturity improved, but cyber defense did not.”

The Illusion of Control

The real issue is not that organizations measure cyber risk. It’s how they define it.

Controls, metrics, and governance signals are useful, especially for consistency, oversight, and accountability. However, they do not necessarily explain how an attacker would gain access, move laterally, escalate privileges, or disrupt operations.

It’s a bit like judging a fire department by the condition of its trucks rather than by how well the crew performs during an actual fire.

Gartner puts it bluntly:

“Cyber-risk management is paperwork, not proof. Risk is defined by what’s missing from frameworks, not by what would fail under attack.”

The “Waterline” Problem

In the visual below from Gartner, cyber risk is depicted as an iceberg. Above the waterline are the items leaders commonly review: heat maps, maturity scores, risk registers, and dashboards. Below the surface are the factors that more directly determine whether an attack succeeds, including real attack paths, identity and privilege dependencies, control behavior under pressure, and friction in detection and response.

This is not an argument against executive summaries. Leaders need a clear view of complex issues. The problem begins when the visible layer becomes a substitute for the underlying reality.

Attackers are not navigating your dashboard. They are navigating your environment.

The Hard Part We Avoid

The reality is that validating exposure is harder than reporting on cyber risk.

Gartner reinforces what that harder work actually looks like:

“Exposure validation requires engineers who can emulate, simulate, or at least understand how attacks actually unfold in the environment and prove which controls fail under pressure.”

One of the most effective ways to validate exposure is to place teams in realistic attack scenarios within a cyber range that mirrors enterprise architecture and security tooling. Then you can test how controls behave under pressure and observe how people actually respond when conditions are moving quickly and not everything is clear. The results are rarely neat, but once gaps are identified, they can be directly addressed.

What Actually Changes Outcomes

At its core, cyber risk management is supposed to influence decisions about whether to proceed, delay, redesign, or accept exposure. It should clarify trade-offs between speed, cost, and survivability.

That only works when cyber risk is part of the decision process early enough to matter. As the Gartner report authors note:

“Risk enters after architectures are set and vendors are chosen. Committees confirm process, not direction.”

When review happens after major choices are already made, cyber risk management becomes far less effective. It is easier to document exposure than to redesign architecture, revisit vendor selections, or change delivery timelines after commitments are in place.

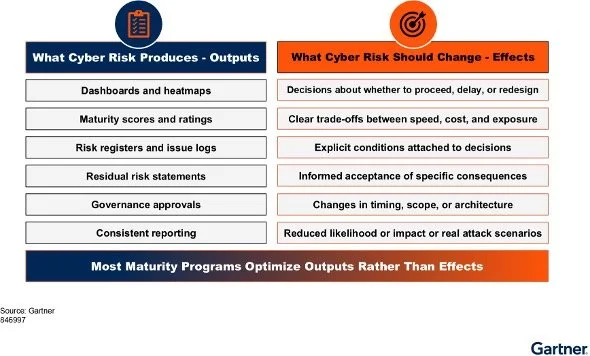

Many programs also optimize for outputs rather than outcomes (see chart below). The focus should be on what changes decisions and reduces real exposure.

When an attack happens, the issue is not whether the documentation was complete. It’s why the design held up on paper but failed in practice.

Where This Leaves Security Leaders

Governance, frameworks, and reporting are necessary, but they are not sufficient on their own.

Why? Because cyber risk can’t just be described. It has to be understood in the context of how attacks actually unfold in your environment and how your team performs when it matters. That requires seeing it play out, not just mapping it to a control.

How Cloud Range Fits

There is a disconnect between how cyber risk is reported and how it plays out in a real attack. Most organizations have visibility into control gaps and maturity scores, but far fewer have a clear, tested understanding of how an attacker would move through their environment or how their team would respond under pressure.

That’s where Cloud Range comes in.

Cloud Range gives organizations a way to simulate real attack scenarios in a controlled environment, observe how those attacks unfold, and see how teams, processes, and technology perform in real time. It moves the conversation from assumed exposure to demonstrated behavior, helping security leaders make decisions based on what actually happens, not just what’s documented.

It’s not about replacing governance. It’s about grounding it in evidence.

If you’re not confident you can clearly show how an attack would unfold or how your team would respond, it’s worth taking a closer look.

See how Cloud Range helps organizations validate cyber readiness under real attack conditions.

Gartner, Maverick: Cyber-Risk Management Is Governance Theater That Doesn’t Strengthen Cyber Defense, by Matt Hager, Lauren Kornutick, Sam Olyaei, Paul Proctor, Katell Thielemann, Andrew Walls, 23 March 2026. Gartner is a registered trademark and service mark of Gartner, Inc. and/or its affiliates in the U.S. and internationally and is used herein with permission. All rights reserved.